What is the impact of Agentic AI on Canadian businesses in 2026?

In 2026, Agentic AI transforms AI from a “tool” into a “teammate” that performs multi-step tasks autonomously. For Canadian SMBs, this means reducing manual administrative loads by up to 30% and increasing customer response speeds by 50% through self-correcting workflows that handle orders, reporting, and maintenance with minimal oversight.

The Setting: Ontario’s Shift to Autonomous Intelligence

Big Brain Way, a Toronto-based leader in digital foundations, has observed a critical shift in the local market. While generative AI was the focus of 2024, the “operational year” of 2026 belongs to Agentic AI: systems that do more than simply record your thoughts. Instead, they create plans and take action to achieve your goals.

Recent 2025/2026 data from KPMG Canada reveals that 91% of Canadian companies are already laying the groundwork for agentic systems, with 27% having fully implemented them.

The Challenge: Escaping the “Manual Trap”

Many Ontario-based retailers and professional services firms are currently “drowning in the manual trap.” This occurs when human staff are locked into low-value, high-volume tasks like:

- Fragmented Information Flows: Manual data entry across CRM, email, and spreadsheets.

- Delayed Response Times: Missing leads because of inconsistent follow-up.

- Reactive Maintenance: Fixing hardware or software only after it breaks, rather than predicting failure.

The Big Brain Way Approach: The 12-Month Roadmap

Big Brain Way transitions clients from a Tier 1 Digital Foundation to Tier 3 AI-Enabled Operations using a structured approach:

- Use-Case Identification: Mapping workflows to identify “nondeterministic” processes ripe for agents, such as Order Agents that handle inventory, shipping, and CRM updates without a single human click.

- The PRAL Loop: Implementing agents that Perceive data, Reason through scenarios, Act on decisions, and Learn from outcomes to refine future performance.

- Human-in-the-Loop Governance: Ensuring high-risk decisions (like financial approvals) require human sign-off, while routine tasks run autonomously.
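
To make the PRAL Loop and the human sign-off rule more concrete, here is a minimal Python sketch of a toy Order Agent. It is an illustration under stated assumptions, not Big Brain Way's implementation: the `OrderAgent` class, the order fields (`sku`, `qty`, `unit_price`), and the `APPROVAL_THRESHOLD_CAD` cut-off are all hypothetical, and the "Learn" step only records outcomes rather than retraining anything.

```python
from dataclasses import dataclass, field

# Hypothetical cut-off: orders at or above this value need a human sign-off.
APPROVAL_THRESHOLD_CAD = 1000.0


@dataclass
class OrderAgent:
    """Toy agent that follows a Perceive, Reason, Act, Learn cycle."""
    stock: dict
    history: list = field(default_factory=list)

    def perceive(self, order: dict) -> dict:
        # Perceive: collect the order and the current stock level for its SKU.
        return {"order": order, "in_stock": self.stock.get(order["sku"], 0)}

    def reason(self, state: dict) -> str:
        # Reason: pick one of three outcomes from the perceived state.
        order = state["order"]
        if state["in_stock"] < order["qty"]:
            return "backorder"
        if order["qty"] * order["unit_price"] >= APPROVAL_THRESHOLD_CAD:
            return "needs_human_approval"  # high-risk: do not act autonomously
        return "fulfil"

    def act(self, state: dict, decision: str) -> str:
        # Act: only routine orders change stock without a human in the loop.
        order = state["order"]
        if decision == "fulfil":
            self.stock[order["sku"]] -= order["qty"]
        return decision

    def learn(self, order: dict, outcome: str) -> None:
        # Learn: in this sketch, simply keep a record of what happened.
        self.history.append((order["sku"], outcome))

    def handle(self, order: dict) -> str:
        state = self.perceive(order)
        decision = self.reason(state)
        outcome = self.act(state, decision)
        self.learn(order, outcome)
        return outcome


agent = OrderAgent(stock={"A1": 10})
print(agent.handle({"sku": "A1", "qty": 2, "unit_price": 50.0}))   # fulfil
print(agent.handle({"sku": "A1", "qty": 5, "unit_price": 400.0}))  # needs_human_approval
print(agent.handle({"sku": "A1", "qty": 20, "unit_price": 1.0}))   # backorder
```

The design point is that the approval check lives in the reasoning step, so a high-value order is routed to a person before any stock or financial record is touched.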

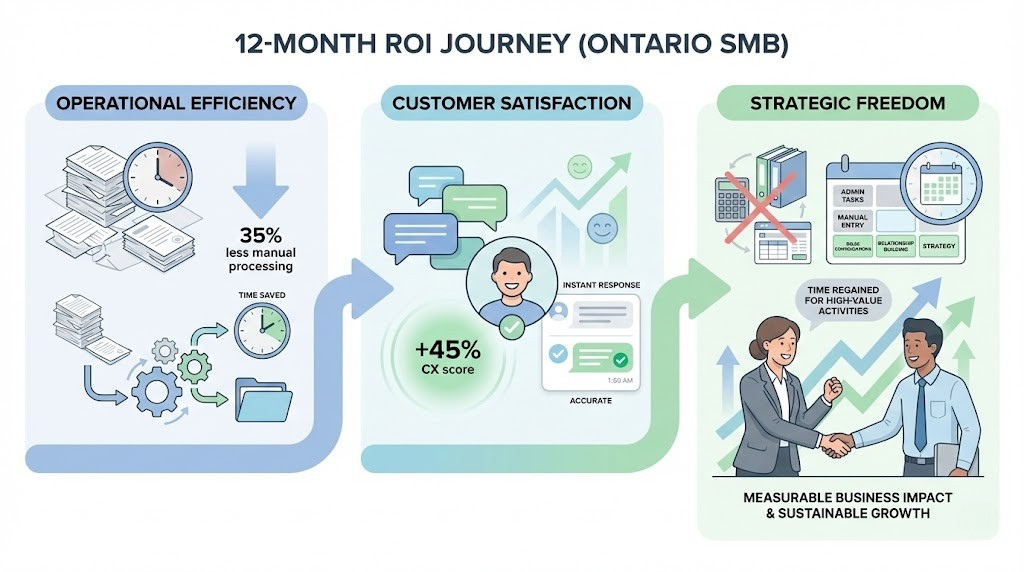

Outcomes: Measurable ROI in 2026

After 12 months of implementation, typical results for an Ontario SMB include:

- Operational Efficiency: A 35% reduction in manual processing time for complex orders.

- Customer Satisfaction: A 45% improvement in CX scores due to instant, accurate agent responses.

- Strategic Freedom: Staff spend more time on high-value sales and relationship building rather than admin.

FAQ: Navigating the Agentic AI Era

Q: What is the difference between simple automation and Agentic AI?

A: Simple automation follows rigid “If-This-Then-That” rules. Agentic AI can reason, adapt to uncertain outcomes, and coordinate multiple steps to achieve a broad goal.

Q: Is my Canadian business data safe with AI agents?

A: Implementation in 2026 requires robust governance. Systems should include audit logs, data privacy guardrails, and transparency protocols so that every agent action is traceable.
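
As a rough illustration of what "traceable" can mean in practice, the sketch below keeps an in-memory audit log in which each entry stores a hash of the previous one, so later tampering can be detected. The `AuditLog` class, its field names, and the agent and action names in the usage lines are assumptions made for this example; a production system would also need durable storage and access controls.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only log where each entry is chained to the previous one by hash."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself.
        body = {k: v for k, v in entry.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def record(self, agent: str, action: str, details: dict) -> dict:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "details": details,
            "prev_hash": self.entries[-1]["hash"] if self.entries else "",
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute each hash and check the chain links in order.
        prev_hash = ""
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("order-agent", "update_crm", {"order_id": 1})
log.record("order-agent", "book_shipping", {"order_id": 1})
print(log.verify())  # True while no entry has been altered
```

If any recorded entry is edited afterwards, `verify()` returns `False`, which gives reviewers a simple way to confirm that the action history is intact.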

Q: Why does Big Brain Way recommend Tier 1 stability before AI agents?

A: You cannot automate chaos. A solid foundation website, a verified Google Profile, and clean data are required for an AI agent to function effectively.

Q: How do AI agents improve local SEO?

A: Agents optimize “Vector Search” readiness by structuring your content for semantic retrieval, ensuring your business is the “default answer” for local search queries.
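
For readers curious about the mechanics, the toy Python sketch below shows the basic idea behind vector-style retrieval: turn text into vectors, then return the document closest to the query. It is heavily simplified. The `embed` function is a stand-in that counts words, whereas real vector search uses embeddings from a trained model that capture meaning rather than exact word overlap, and the sample business descriptions are invented for the example.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Stand-in for a real embedding model: a simple bag-of-words count vector.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse count vectors.
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def best_match(query: str, documents: list) -> str:
    # Return the document whose vector is closest to the query vector.
    q = embed(query)
    return max(documents, key=lambda d: cosine(q, embed(d)))


docs = [
    "emergency plumber in toronto open 24 hours",
    "bakery in ottawa with fresh bread daily",
]
print(best_match("24 hour plumber toronto", docs))
```

Content that states clearly what a business does, where, and when gives any retrieval system, toy or real, more to match against.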

Q: What are the main barriers to adopting Agentic AI in Canada?

A: The primary barriers are data quality (cited by 71% of leaders), cybersecurity concerns, and a lack of AI literacy in the workforce.
